A Data Availability Committee (DAC) is a solution for scaling the transaction throughput of Tezos Smart Rollups. In summary, a DAC enables storing transaction data for a smart rollup off-chain. Rollup nodes retrieve the transaction payloads from the DAC members and import them into their Smart Rollup virtual machines, instead of retrieving them directly from Tezos blocks, and thus circumvent the data limit imposed by Tezos block sizes. In this article we’ll take a look at the infrastructure built by TriliTech and Marigold engineers to support a DAC. But before delving into the specifics, let’s first understand a bit better how rollups work, to see how they fit in.

Smart Rollups

With the activation of the Mumbai protocol upgrade on Tezos, Smart Rollups are live on Mainnet. A Smart Rollup is an application executed off-chain by one or more rollup nodes, which periodically submit a hash of the application state on-chain. Anyone can run a smart rollup node and post commitments by locking a bond of 10,000 tez. Furthermore, the Tezos economic protocol provides a mechanism to challenge and disprove fraudulent commitments. Since Smart Rollups execute outside of Layer 1, they have the potential to massively scale the amount of computation involved in running blockchain applications.

There are currently two data sources from which a Smart Rollup can import messages to process: the protocol-wide rollup inbox and the reveal data channel. We’ll cover both of them shortly, but before doing so let’s outline the requirements we’d like to satisfy:

- Integrity: The data imported into a Smart Rollup must be verifiably correct, that is, it is possible to prove that it has not been tampered with.

- Availability: A Smart Rollup must be able to retrieve any message addressed to it. Not being able to do so could hinder the progress of a rollup at best, and could allow a dishonest party to take control of the rollup at worst.

The Rollup Inbox

The rollup inbox is stored in the Tezos blockchain’s context. Users add messages to the rollup inbox via a dedicated manager operation. Rollup nodes then download blocks from the Tezos network to retrieve the contents of the inbox.

The protocol ensures that the data published through the rollup inbox is well-defined; that is, there can be no disputes about what the messages consist of or in which order they were received. Data availability is also guaranteed, since blocks can be downloaded from any node participating in the Tezos network. However, this method sacrifices scalability, since the bandwidth of the rollup inbox is limited by the size of a block (currently 500 KB) and the minimum time it takes to bake a new block (15 seconds at the time of writing). As a result, the bandwidth of the rollup inbox is restricted to around 33 KB/s, which is further shared among all active rollups.
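The 33 KB/s figure follows directly from the two limits quoted above. Here is a minimal sketch of the arithmetic; the helper names and the example of ten active rollups are illustrative assumptions, and the constants are simply the values stated in this post.

```python
# Values quoted in the text; both are protocol parameters that may change.
BLOCK_SIZE_KB = 500   # maximum block size
BLOCK_TIME_S = 15     # minimum time between two blocks


def inbox_bandwidth_kb_per_s(block_size_kb: float, block_time_s: float) -> float:
    """Upper bound on inbox throughput, assuming a block holds only rollup messages."""
    return block_size_kb / block_time_s


def per_rollup_share(total_kb_per_s: float, active_rollups: int) -> float:
    """Even split of the inbox bandwidth among active rollups (a simplification)."""
    if active_rollups < 1:
        raise ValueError("at least one active rollup is required")
    return total_kb_per_s / active_rollups


total = inbox_bandwidth_kb_per_s(BLOCK_SIZE_KB, BLOCK_TIME_S)
print(f"inbox bandwidth: {total:.1f} KB/s")
print(f"share with 10 rollups (hypothetical): {per_rollup_share(total, 10):.1f} KB/s")
```

In practice a block also carries other operations, so the real ceiling for rollup messages is lower than this bound.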

The Reveal Data Channel

To overcome the scalability limitations of the rollup inbox, Smart Rollups offer an additional source for importing data: the reveal data channel. It allows a rollup kernel (the application logic of a rollup) to request from the rollup node the preimage of a given hash. Such a preimage is called a page and has a size limit of 4 KB. The rollup node is expected to provide pages in a fixed location known as the reveal data directory. There is no limit on the number of pages a rollup can request at each Tezos level, which is what makes the channel scalable. The integrity of the data is enforced because a preimage is requested through its hash, so any page the node returns can be checked against the hash that was asked for. However, unlike the rollup inbox, the reveal data channel does not guarantee availability of the data. That is, there are no assurances that a rollup node will have the page corresponding to a given hash when it’s requested. This is precisely the problem addressed by Data Availability Committees.
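To make the integrity/availability distinction concrete, here is a minimal sketch of a hash-addressed page store. It is not the rollup node's implementation: the use of a plain BLAKE2b-256 hex digest as the file name, and the function names `store_page` and `reveal`, are assumptions made for illustration only.

```python
import hashlib
import tempfile
from pathlib import Path

MAX_PAGE_SIZE = 4096  # 4 KB page limit mentioned in the text


def page_hash(page: bytes) -> str:
    # Assumption: plain BLAKE2b-256 hex digest; the real hashing scheme may differ.
    return hashlib.blake2b(page, digest_size=32).hexdigest()


def store_page(reveal_dir: Path, page: bytes) -> str:
    """Write a page into the reveal data directory, named after its hash."""
    if len(page) > MAX_PAGE_SIZE:
        raise ValueError("page exceeds the 4 KB limit")
    h = page_hash(page)
    (reveal_dir / h).write_bytes(page)
    return h


def reveal(reveal_dir: Path, h: str) -> bytes:
    """Return the preimage of h, or fail if it is missing or corrupted."""
    path = reveal_dir / h
    if not path.exists():
        # Availability failure: nothing forces the page to be present.
        raise FileNotFoundError(f"no page for hash {h}")
    page = path.read_bytes()
    if page_hash(page) != h:
        # Integrity failure: always detectable, since the hash is known.
        raise ValueError("page does not match the requested hash")
    return page


with tempfile.TemporaryDirectory() as d:
    directory = Path(d)
    h = store_page(directory, b"hello rollup")
    print(reveal(directory, h))
```

A tampered page is always caught by the hash check, while a missing page cannot be recovered locally at all. The second case is the gap a DAC is meant to close.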

Data Availability Committees

A DAC consists of a group of parties that commit to storing copies of input data and keeping the data available upon request. Our implementation of DACs:

- Provides the infrastructure needed to send, distribute and store data of arbitrary size among the DAC members.

- Enables rollup nodes to download payloads from a DAC and to populate the reveal data directory.

- Defines a communication pattern that can be used by Smart Rollups to import the data stored by committee members, provided that a sufficient number of committee members have signed their commitment to make a copy of the data available.

It’s important to note that the DAC stack is external to the Tezos economic protocol. That is, the Tezos Layer 1 is not aware of any DAC. The relationship between DACs and Smart Rollups is one-to-many: a DAC can serve multiple rollups, while a single rollup can use at most one DAC.

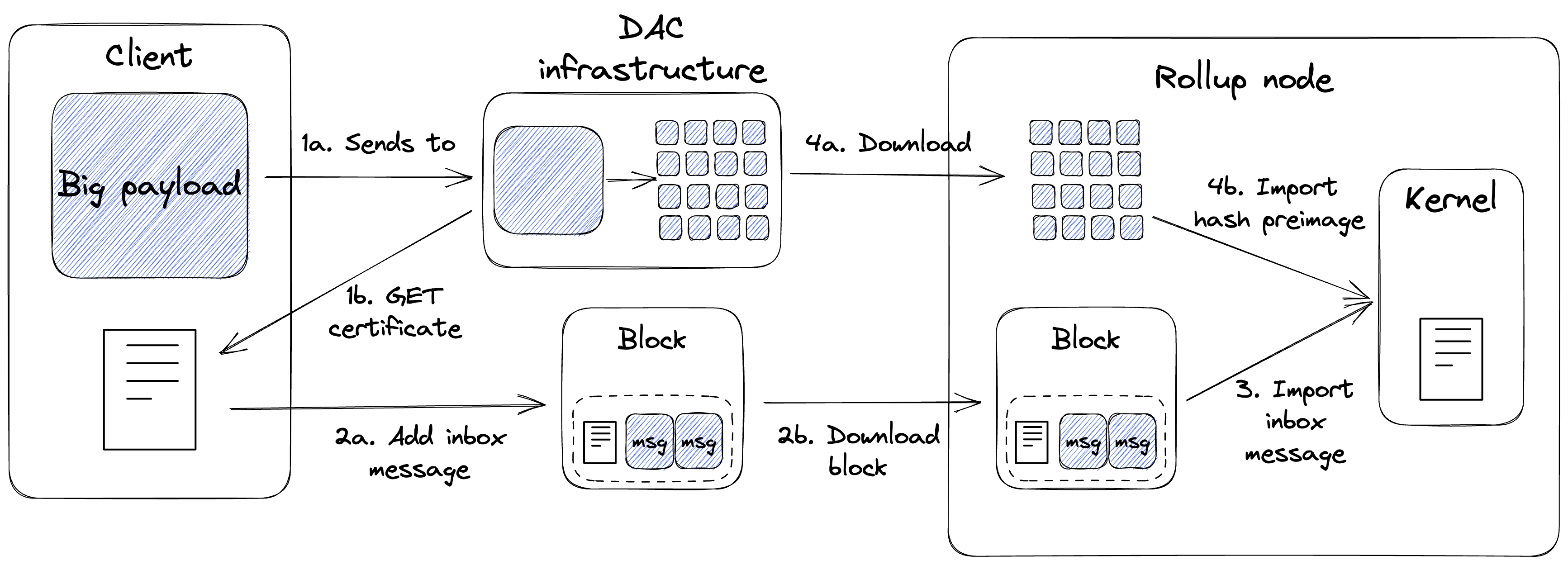

Here’s a high-level description of the workflow:

- The user sends the payload to a DAC (1a) and waits for a certificate with a sufficient number of signatures (1b), as determined by the rollup kernel (the application logic of a particular rollup).

- The certificate, which is small in size (approximately 140 bytes), is posted to the rollup inbox as a Layer 1 message (2a) and will eventually be downloaded by the rollup node (2b).

- The rollup kernel imports the certificate contained in the rollup inbox, and verifies that it contains valid signatures of several committee members. It is the responsibility of the rollup kernel to define the minimum number of signatures required for a certificate to be considered valid.

- If the certificate is deemed valid, the rollup kernel asks the rollup node for the pages of the original payload. The rollup node downloads those pages from the DAC infrastructure (4a) before importing them into the kernel (4b).

The rollup kernel must implement the logic to determine if a DAC certificate is valid, and to request the original payload by importing the corresponding pages through the reveal data channel.
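The threshold check a kernel has to perform can be sketched as follows. This is an illustration under stated assumptions rather than the actual certificate format: the `Certificate` fields, the bitmask of witnesses, and the `verify_aggregate` callback (standing in for real signature verification, which is not implemented here) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass(frozen=True)
class Certificate:
    # Hypothetical layout, for illustration only.
    root_hash: bytes            # hash identifying the stored payload
    aggregate_signature: bytes  # combined signature of the signing members
    witnesses: int              # bit i set => committee member i signed


def signers(cert: Certificate, committee: Sequence[bytes]) -> List[bytes]:
    """Public keys of the committee members flagged in the witness bitmask."""
    return [pk for i, pk in enumerate(committee) if (cert.witnesses >> i) & 1]


def is_valid(
    cert: Certificate,
    committee: Sequence[bytes],
    threshold: int,
    verify_aggregate: Callable[[Sequence[bytes], bytes, bytes], bool],
) -> bool:
    """Accept a certificate only if enough known members signed the root hash."""
    if cert.witnesses >> len(committee):
        return False  # bits set for members that do not exist
    signed = signers(cert, committee)
    if len(signed) < threshold:
        return False  # not enough commitments
    # verify_aggregate is a stand-in for the real cryptographic check.
    return verify_aggregate(signed, cert.root_hash, cert.aggregate_signature)
```

Only after `is_valid` returns true would the kernel go on to request the payload pages through the reveal data channel, starting from `root_hash`.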

Deploying a DAC committee

The following is a brief description of how to set up and deploy a DAC to serve data for a Smart Rollup. Note that the software has not yet been released, but it can still be tested on Mondaynet and Nairobinet after building Octez from source.

For reference, this blog post uses the current Tezos repository master branch at the time of writing. We plan to publish all the DAC binaries with one of the coming Octez releases.

After building from source, users will find two experimental executables:

- ./octez-dac-node (the DAC node)

- ./octez-dac-client (the DAC client)

The octez-dac-node executable can be used to set up a new committee or track an existing one. The octez-dac-client can be used to send payloads to the DAC for storage, and to retrieve certificates signed by the data availability committee.

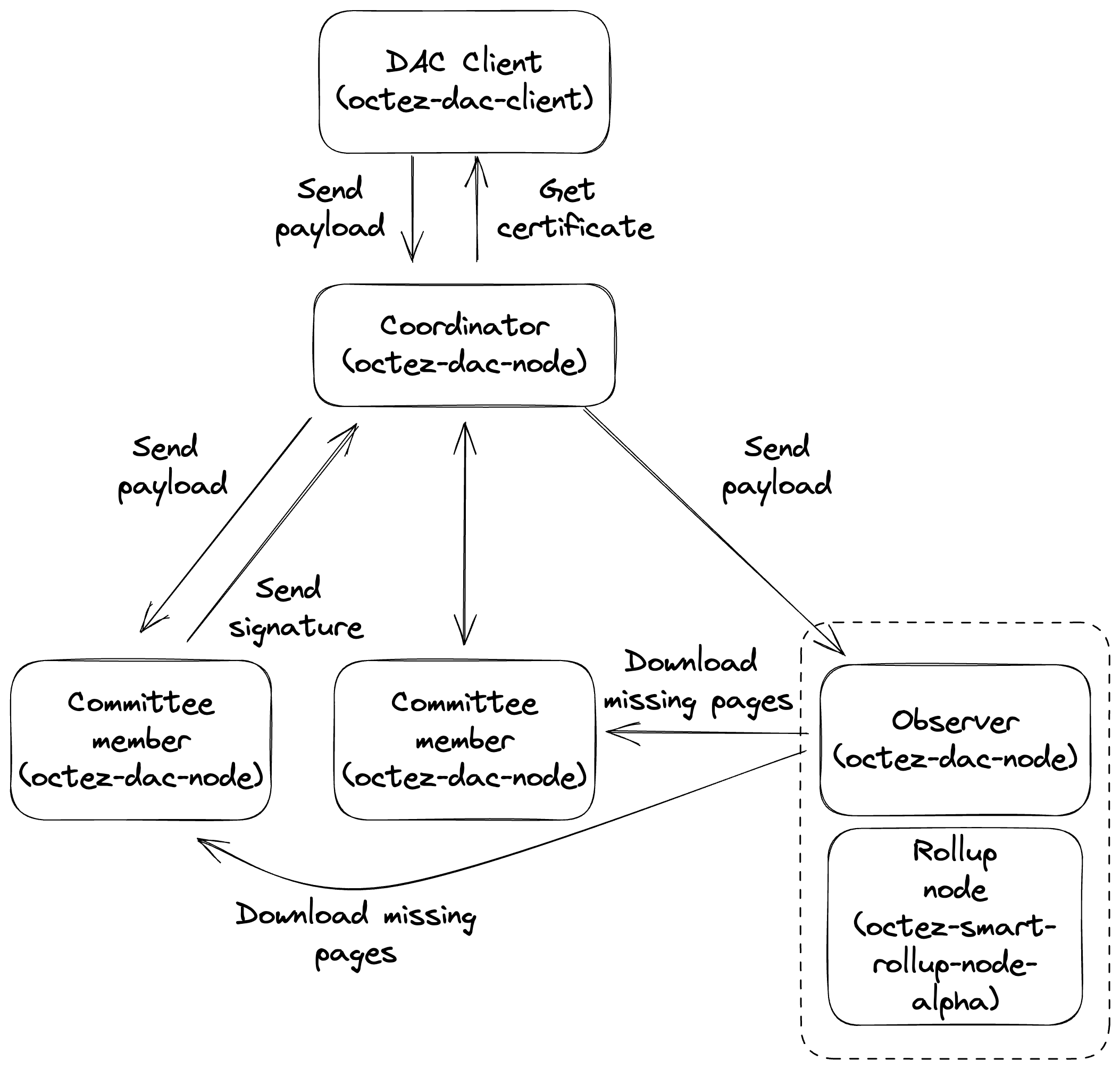

To set up a DAC, several inter-connected instances of the DAC node must be executed. In particular, the DAC node supports three modes of operations: coordinator mode, committee member mode, and observer mode, described next.

Coordinator

The Coordinator acts as a gateway between the clients of the DAC and the other DAC nodes. It is responsible for receiving a payload, splitting it into pages of at most 4 KB each (the maximum size of a preimage that can be imported into a rollup), and forwarding the resulting pages to the other nodes. It is also responsible for providing DAC clients with data availability certificates. A DAC node running in coordinator mode must have access to the public keys of the committee members. A coordinator can be configured with the following command:

./octez-dac-node configure as coordinator with data availability committee members $TZ4_PUBLIC_KEYS --data-dir $DATA_DIR --reveal-data-dir $REVEAL_DATA_DIR

where:

- $TZ4_PUBLIC_KEYS is a list of BLS aggregate account (tz4 account) public keys.

- $DATA_DIR is the directory containing the persisted store of the DAC node instance. This argument is optional and defaults to ~/.octez-dac-node when missing. It is suggested to give it an explicit value in case multiple DAC nodes run on the same host.

- $REVEAL_DATA_DIR is a separate directory where payloads are stored. This argument is also optional.

Once it has been configured, the coordinator can be run with:

./octez-dac-node run --data-dir $DATA_DIR

where $DATA_DIR is the same as for the configuration command.
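The page-splitting step performed by the coordinator can be sketched as follows. This is a simplified illustration and not the DAC node's actual encoding: the one-byte page tags, the BLAKE2b-256 hash, and the functions `build_pages` and `reassemble` are assumptions. The idea is that content pages are hashed, the hashes are packed into further pages, and the process repeats until a single root hash identifies the whole payload.

```python
import hashlib

PAGE_SIZE = 4096               # 4 KB limit on a page
HASH_SIZE = 32                 # assumed BLAKE2b-256
CONTENT, HASHES = b"\x00", b"\x01"   # hypothetical one-byte page tags
BODY = PAGE_SIZE - 1           # bytes left after the tag
HASHES_PER_PAGE = BODY // HASH_SIZE


def page_hash(page):
    return hashlib.blake2b(page, digest_size=HASH_SIZE).digest()


def chunks(seq, size):
    """Split a bytes object or a list into consecutive pieces of at most `size`."""
    return [seq[i:i + size] for i in range(0, len(seq), size)]


def build_pages(payload):
    """Return (root_hash, store), where store maps each page hash to its page."""
    if not payload:
        raise ValueError("empty payload")
    store = {}
    level = [CONTENT + c for c in chunks(payload, BODY)]
    while True:
        hashes = []
        for page in level:
            h = page_hash(page)
            store[h] = page
            hashes.append(h)
        if len(hashes) == 1:
            return hashes[0], store
        # Pack the hashes of this level into pages of the next level.
        level = [HASHES + b"".join(g) for g in chunks(hashes, HASHES_PER_PAGE)]


def reassemble(root_hash, store):
    """Rebuild the payload by following hashes down from the root page."""
    page = store[root_hash]
    tag, body = page[:1], page[1:]
    if tag == CONTENT:
        return body
    return b"".join(reassemble(h, store) for h in chunks(body, HASH_SIZE))


payload = bytes(range(256)) * 100
root, pages = build_pages(payload)
print(len(pages), "pages, round trip ok:", reassemble(root, pages) == payload)
```

With a structure like this, a consumer only needs the root hash to fetch and verify every page of a payload of arbitrary size, one 4 KB reveal at a time.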

Committee Member

A committee member receives pages from the coordinator and stores them on disk. Once all the pages for the original payload are received, the committee member sends a cryptographic signature to the coordinator to confirm its commitment to storing the data and making it available to external entities upon request. The coordinator collects these signatures and includes them in the data availability certificate for the payload.

To connect with the coordinator, a committee member node needs to use the following command for configuration:

./octez-dac-node configure as committee member with coordinator $COORDINATOR_RPC_ADDR and signer $TZ4_ADDRESS --data-dir $DATA_DIR --reveal-data-dir $REVEAL_DATA_DIR

where,

- $COORDINATOR_RPC_ADDR is the RPC address of the coordinator node, in the format {host}:{port};

- $TZ4_ADDRESS is the address of the tz4 account that will be used to sign commitments to the availability of payloads; and,

- $DATA_DIR and $REVEAL_DATA_DIR serve the same function as in the coordinator node, but should have different values from the coordinator node if the two run on the same machine.

Observer

Similar to a committee member, an observer node also receives pages from the coordinator and stores them on disk. If the observer is run on the same host machine as a rollup node, and its reveal data directory is set to the same one as on the rollup node, it becomes responsible for providing the pages corresponding to the input payload. To configure an observer you can run the following command:

./octez-dac-node configure as observer with coordinator $COORDINATOR_RPC_ADDR and committee member rpc addresses $COMMITTEE_MEMBER_RPC_ADDRESSES --data-dir $DATA_DIR --reveal-data-dir $REVEAL_DATA_DIR

where,

- $COMMITTEE_MEMBER_RPC_ADDRESSES is the list of the RPC addresses of the committee member nodes, in the format {host}:{port}.

Retrieving a DAC certificate

Once the DAC infrastructure has been set up, users can request the committee members to store a payload of arbitrary size via the octez-dac-client command, by running the following:

./octez-dac-client send payload to coordinator $COORDINATOR_RPC_ADDR with content $PAYLOAD --wait-for-threshold $THRESHOLD

where,

- $COORDINATOR_RPC_ADDR is the address of the DAC coordinator, in the form {host_ip}:{port};

- $PAYLOAD is the hex-encoded payload that committee members will store; and,

- $THRESHOLD is the minimum number of committee members that must commit to make the data available before the command returns.

Upon executing the command, a hex-encoded data availability certificate is returned, with a size of approximately 140 bytes. This certificate can be posted to the global rollup inbox and will eventually be processed by the rollup kernel.
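Since the client expects the payload in hex, a small helper can prepare the arguments from raw bytes. The sketch below only builds the argument list for the command shown above and does not run it; the function name and the coordinator address used in the example are placeholders, not values from a real deployment.

```python
from typing import List


def send_payload_argv(coordinator_rpc_addr: str, payload: bytes, threshold: int) -> List[str]:
    """Build the octez-dac-client invocation for a raw payload (hex-encoded here)."""
    if threshold < 1:
        raise ValueError("threshold must be at least 1")
    return [
        "./octez-dac-client", "send", "payload", "to", "coordinator",
        coordinator_rpc_addr,
        "with", "content", payload.hex(),
        "--wait-for-threshold", str(threshold),
    ]


# Placeholder address and payload, for illustration only.
argv = send_payload_argv("127.0.0.1:10832", b"hello", threshold=2)
print(" ".join(argv))
```

The resulting list can be passed to `subprocess.run` once the DAC binaries are built and a coordinator is reachable at the given address.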

Next Steps

Moving forward, we aim to enhance DACs to ensure seamless integration with Smart Rollups. Some of our planned improvements include:

- Introducing new DAC-related functions to the Rollup Kernel SDK.

- Allowing committee members to commit to data availability for a set period of time.

- Providing in-depth tutorials demonstrating how to write a rollup kernel that utilizes DACs.

We welcome all community feedback on their experience with DACs to help inform our future efforts, so don’t hesitate to join the conversation at #scoru-ecosystem on the Tezos Dev Slack workspace.